$200 Million Lost in 90 Days: The AI Scam Crisis of 2025

Two hundred million dollars. That’s the documented financial loss from deepfake-enabled fraud in the first three months of 2025 - and that only counts reported cases. The real number is almost certainly far higher.

The statistics coming out of 2025 paint a picture of a fraud landscape that has fundamentally shifted. This isn’t an incremental increase in traditional scams. It’s a step-change driven by AI tools that have become simultaneously more powerful and more accessible.

The numbers

- 442% surge in voice phishing (vishing) attacks from the first half to the second half of 2024, according to CrowdStrike research. The trajectory has only steepened in 2025.

- 1,500% increase in deepfake incidents since 2023.

- $40 billion in projected global losses from deepfake-enabled scams by 2027.

- 85% accuracy with which modern AI can clone a person’s voice from just 3–5 seconds of audio.

- 68% of video deepfakes can’t be distinguished from real footage by viewers.

- More than 10% of banks surveyed have each lost over $1 million to a deepfake call.

- 194% surge in deepfake scams across Asia-Pacific in 2024.

- One in three people who reported fraud in 2025 said they actually lost money, up from one in four the previous year. The scams aren’t just more frequent; they’re more effective.

What’s changed

Three things converged to create this crisis.

The Tools Improved. Voice synthesis crossed a critical threshold. Modern text-to-speech systems can clone a person’s voice from a few seconds of audio, capturing pitch, accent, pacing, emotional tone, and subtle speech quirks. On the video side, diffusion models and transformer architectures have eliminated most of the artefacts - unnatural eye movements, lagging facial muscles, inconsistent lighting - that once made deepfakes easy to spot.

The Tools Became Accessible. You don’t need technical expertise or expensive equipment to create a convincing deepfake. Arup’s CIO made a deepfake video of himself in 45 minutes using free software. Voice cloning tools are openly available and require no special skills. The FBI’s IC3 has noted that even low-sophistication criminals are now deploying AI in their scams.

The Tools Became Cheap. The economics of fraud have shifted dramatically. What once required a skilled social engineer spending hours researching and rehearsing can now be partially automated. Scammers are even using specialised tools purpose-built for criminal use; researchers have identified one such tool, called “FraudGPT”.

Who’s getting hit

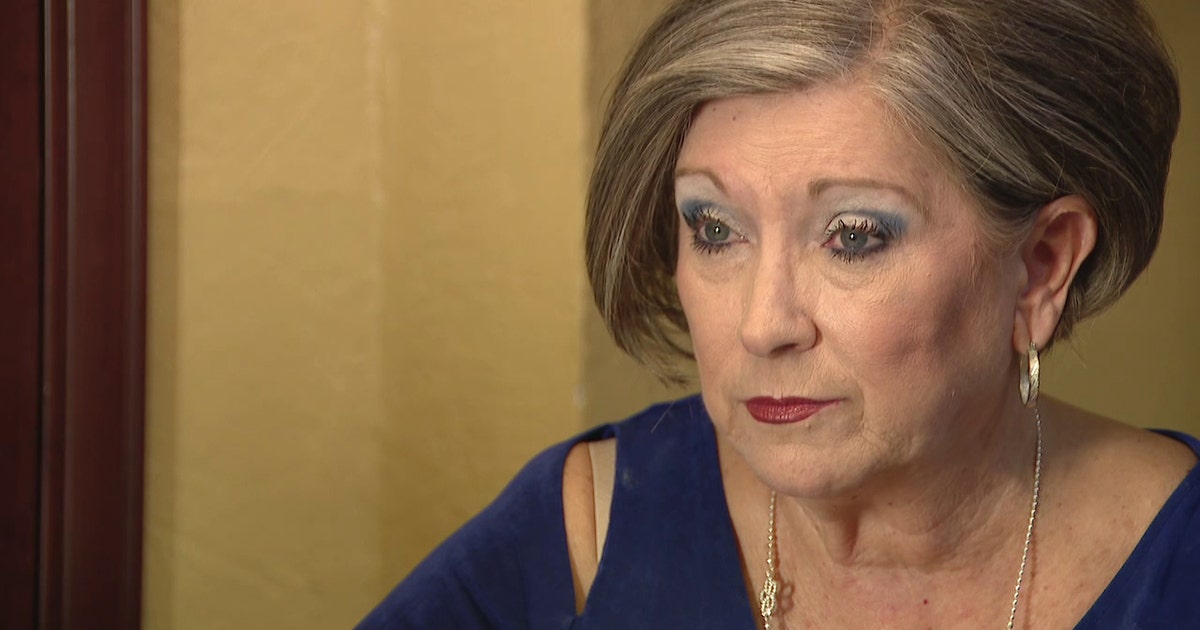

Families are being targeted through grandparent scams and kidnapping hoaxes. A mother in Florida lost $15,000 to an AI clone of her daughter’s voice. An elderly couple in Alabama was targeted using their great-grandson’s cloned voice. These attacks exploit the most powerful human emotion - fear for a loved one’s safety.

Businesses are being targeted through CEO fraud and business email compromise. Arup lost $25 million to a deepfake video call. WPP’s CEO was targeted with a cloned voice. A UK energy firm lost €220,000 to a fake CEO phone call. These attacks exploit organisational authority structures and the natural tendency to comply with instructions from senior leadership.

Investors are being targeted through celebrity endorsement scams. Deepfake videos of Elon Musk and other public figures promoting fraudulent crypto schemes spread across YouTube and X, costing victims thousands each.

Everyone is being targeted through increasingly sophisticated phishing. AI-generated scam websites numbered in the hundreds of thousands in 2025. Personalised voice phishing can now be deployed at scale.

The advice is universal - and universally ignored

Read any expert analysis of deepfake fraud and you’ll find the same recommendation repeated, whether it comes from the FBI, the FTC, Europol, cybersecurity researchers, or post-incident forensic analysts:

“Create a secret word or phrase with your family members to verify their identities.” - FBI PSA, May 2025

It’s excellent advice. It addresses the core vulnerability: our tendency to trust what we see and hear. A code word doesn’t care how good the deepfake is.

But here’s the uncomfortable truth: almost nobody actually does this. Families talk about it and never follow through. Businesses add it to a security policy that nobody reads. Even when people set up a code word, they forget it, or it stays the same for years, or it gets shared too widely to be useful.

The gap between the recommendation and reality is where the damage happens.

Closing the gap

TrustWord exists to close that gap. It takes the universally recommended “code word” approach and implements it the way it should work: automatically rotating, cryptographically generated, unique to each pair of people, protected by biometrics, and available offline.

No code word to remember. No shared secret to manage. No internet required after setup. Every pair of people in your circle has their own unique phrase that changes every 2.5 minutes.
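
To make the idea of a rotating, per-pair phrase concrete, here is a minimal sketch of how such a scheme could work in principle. It is not TrustWord’s actual implementation, which this article does not describe. It assumes each pair of people shares a random secret created once at setup, and it derives a phrase from that secret and the current 2.5-minute time window using an HMAC, similar in spirit to time-based one-time passwords. The word list, the function name `rotating_phrase`, and the three-word phrase length are all hypothetical choices made for illustration.

```python
import hashlib
import hmac
import secrets
import struct
import time

# Hypothetical 16-entry word list for illustration only; a real app would
# use a far larger list so that phrases are much harder to guess.
WORDS = [
    "amber", "birch", "cedar", "delta", "ember", "fjord", "grove", "harbor",
    "iris", "juniper", "kestrel", "lagoon", "meadow", "nectar", "orchid", "pebble",
]

STEP_SECONDS = 150  # 2.5 minutes, the rotation interval mentioned above


def rotating_phrase(pair_secret: bytes, unix_time: float, n_words: int = 3) -> str:
    """Derive the phrase for the time window that contains unix_time."""
    # Both devices compute the same window counter from their own clocks,
    # so no network connection is needed after the secret has been shared.
    counter = int(unix_time) // STEP_SECONDS
    digest = hmac.new(pair_secret, struct.pack(">Q", counter), hashlib.sha256).digest()
    # Map the first n_words bytes of the digest to words. 256 is a multiple
    # of 16, so the modulo does not favour any word.
    return " ".join(WORDS[digest[i] % len(WORDS)] for i in range(n_words))


if __name__ == "__main__":
    # Assumption: the secret is generated once and exchanged securely at setup.
    secret = secrets.token_bytes(32)
    print(rotating_phrase(secret, time.time()))
```

Because the phrase depends only on the shared secret and the clock, two phones holding the same secret show the same words at the same moment, while a different pair, holding a different secret, sees an unrelated phrase.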

It’s the advice every expert gives, turned into an app that actually works.