“Mawmaw, I’m in a lot of pain.”

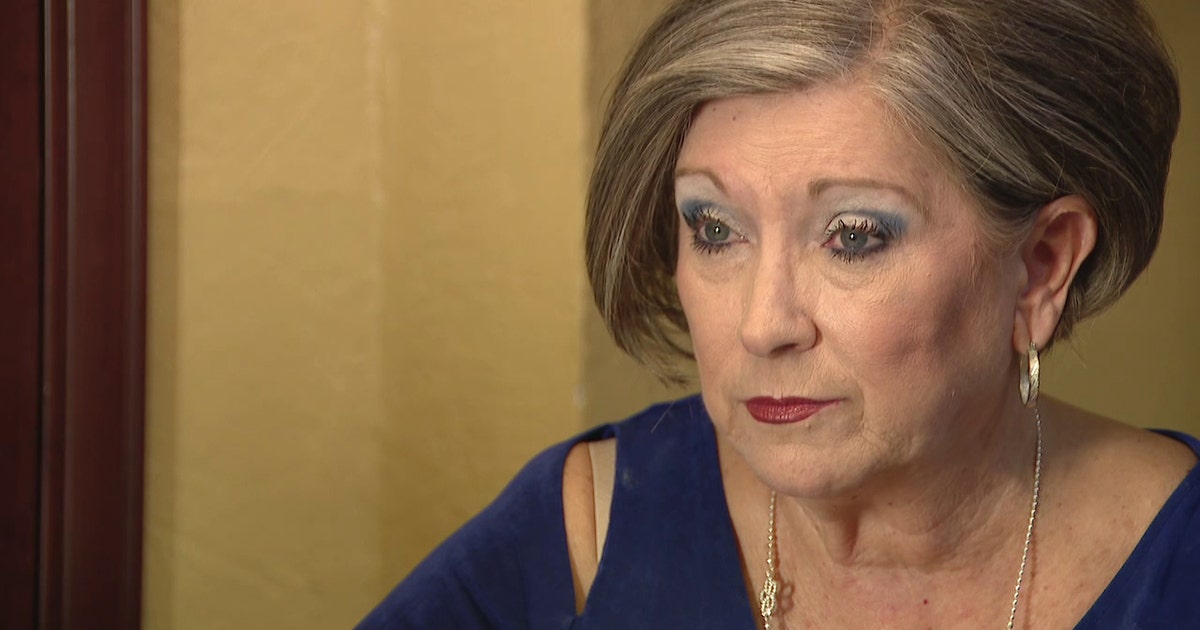

When Alice Boren’s phone rang, she immediately recognised her great-grandson Cameron’s voice. He was hurt. A broken nose, bleeding, and being taken to jail after a car accident. He only had a few minutes to talk.

It wasn’t Cameron. It was an AI-generated clone of his voice, built from audio scraped off social media.

Alice’s story is one of thousands. The “grandparent scam” has existed for decades - a caller pretends to be a grandchild in trouble and begs for money. But until recently, the voice was always obviously wrong. Grandparents went along because of panic and emotion, not because the voice was convincing.

AI has changed that completely. Now, the voice on the phone is convincing. Perfectly convincing. And the results are devastating.

The calls that break your heart - and drain your savings

Sharon Brightwell, Florida. Her “daughter” called crying, saying she’d been in a car accident and lost her unborn baby. She needed $15,000 immediately to avoid criminal charges. Sharon rushed to withdraw cash and handed it to a courier who came to her door. Only after reaching her real daughter - who was safe and had no idea - did Sharon realise she’d been scammed.

Jennifer DeStefano, Arizona. She heard her daughter’s panicked voice begging for help, claiming she’d been kidnapped. The “kidnappers” demanded $1 million. Jennifer was able to verify her daughter was safe before paying - but later described the voice as indistinguishable from her real daughter’s.

An elderly couple in Alabama nearly lost their savings after an AI voice mimicking their grandson described a violent car accident and arrest. The voice included crying, fear, and pain - all generated by AI.

These attacks work because they exploit one of the most powerful forces in human psychology: a parent or grandparent’s love for their child. When you hear someone you love in distress, rational thinking shuts down. You don’t analyse the voice for imperfections. You act.

Scammers know this. They engineer maximum emotional pressure, combined with urgency (“don’t tell anyone, there’s no time”) and secrecy (“don’t call Mum, she’ll panic”). By the time a victim thinks to verify, the money is gone.

How scammers clone your family’s voice

The process is disturbingly simple. Scammers look for video or audio of their target on social media - a Facebook video, an Instagram story, a TikTok clip, even a voicemail greeting. Modern AI needs as little as 3–5 seconds of clean audio to produce a convincing clone.

They pair this with basic research: names, relationships, locations, recent events - all publicly available on social media profiles. Then they call, armed with a synthetic voice and enough personal context to be convincing.

The FBI has issued specific alerts about this technique, recommending families “create a secret word or phrase with your family members to verify their identities.” The FTC receives thousands of reports annually and warns consumers not to trust a voice just because it sounds familiar. The experts all give the same advice:

Establish a secret code word with your family.

The code word everyone recommends but nobody sets up

It’s genuinely good advice. If your “grandchild” calls in distress, ask for the family code word. If they can’t give it, hang up and call them directly.

But here’s the problem: most families never get around to choosing one. Even when they do, people forget it. Or it never changes, so anyone who overhears it once can keep using it. Or the grandparent has a different code word with each grandchild and can’t remember which is which.

The concept is sound. The execution is the hard part.

TrustWord makes the code word actually work

TrustWord is a simple app that does exactly what the experts recommend - but makes it foolproof.

When you set up a circle (say, “Family”), every pair of people gets their own unique passphrase, generated on-device using the same cryptographic approach as authenticator apps. The phrases rotate every 2.5 minutes. No internet needed to check them.
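For the technically curious, here is a minimal sketch of how a rotating passphrase for one pair of people could be derived, in the style of the time-based codes that authenticator apps use. It is not TrustWord’s actual implementation: the tiny word list, the use of HMAC-SHA256, the three-word length and the function names are all assumptions made for illustration.

```python
import hashlib
import hmac
import struct

PERIOD_SECONDS = 150  # 2.5 minutes, the rotation interval described above

# Hypothetical 16-word list; a real app would need a far larger one.
# 16 divides 256 evenly, so mapping a byte to a word introduces no bias.
WORDS = [
    "amber", "birch", "cedar", "delta", "ember", "fjord", "grove", "harbor",
    "iris", "juniper", "kestrel", "lagoon", "maple", "nectar", "olive", "pebble",
]


def passphrase(pair_secret: bytes, unix_time: int, n_words: int = 3) -> str:
    """Derive the phrase for the time window that contains unix_time."""
    # Both phones compute the same counter for the same 2.5-minute window.
    counter = unix_time // PERIOD_SECONDS
    # Keyed hash of the counter: without the pair's secret, the phrase
    # cannot be computed from the time alone.
    digest = hmac.new(pair_secret, struct.pack(">Q", counter), hashlib.sha256).digest()
    return " ".join(WORDS[b % len(WORDS)] for b in digest[:n_words])


def matches(pair_secret: bytes, spoken: str, unix_time: int) -> bool:
    """Check a phrase read out over the phone against the expected one."""
    expected = passphrase(pair_secret, unix_time)
    # Constant-time comparison on bytes, after normalising case and spacing.
    return hmac.compare_digest(expected.encode(), spoken.strip().lower().encode())
```

In this sketch each pair of phones would hold the same secret (assumed here to be exchanged when the circle is set up), so both can work out the current phrase from the clock alone, with no network connection. A caller who does not have the secret has nothing to compute the phrase from.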

Here’s what happens when a suspicious call comes in:

Call: “Gran, I’ve been in an accident, I need help-”

You: “Oh no! What’s your TrustWord for me, love?”

Scammer: Can’t answer.

Hang up. Call your grandchild’s real number.

No code word to remember. No shared secret that could be overheard. Each pair of people has different phrases. They change automatically. And the app is protected by Face ID or fingerprint, so even if someone has your phone, they can’t see your words.

Set it up this weekend

TrustWord takes less than a minute to set up. Create a circle, have your family members scan a QR code, and you’re done. Next time you or anyone in your family gets a suspicious call, you have an instant, foolproof way to verify.

It’s free for up to 10 people - more than enough for most families. No accounts to create, no email addresses to enter, no personal information collected at all.

Your family’s safety shouldn’t depend on remembering a code word you set up three years ago. It should be as easy as glancing at your phone.