Your mother’s voice isn’t proof it’s your mother.

In 2025, scammers can clone anyone’s voice from just a few seconds of audio scraped from social media, voicemail greetings, or public videos. The result is a phone call that sounds exactly like someone you trust - your parent, your child, your boss - saying whatever the scammer wants them to say.

This isn’t a future threat. It’s happening right now, thousands of times a day.

The numbers are staggering

Voice phishing attacks surged 442% between the first and second halves of 2024. Global losses from deepfake-enabled fraud exceeded $200 million in just the first three months of 2025 - and that counts only reported cases. By 2027, analysts project that AI-enabled fraud losses could reach $40 billion in the US alone.

The barrier to entry has collapsed. Readily available AI tools can produce an 85% voice match from just 3–5 seconds of clean audio. Scammers harvest voice samples from Facebook videos, Instagram stories, TikTok clips, YouTube content, and even voicemail greetings. Once they have your voiceprint, they can make you “say” anything in a real-time phone call - complete with emotion, hesitation, and crying.

How a voice cloning scam works

The playbook is remarkably consistent:

Step 1: Harvest. Scammers scrape audio of their target from social media, public recordings, or even a brief phone call where they get you to say a few sentences.

Step 2: Clone. Using freely available AI tools, they generate a synthetic version of the target’s voice. This takes minutes, not hours.

Step 3: Call. They phone the victim - usually a family member - using the cloned voice. The “family member” is in distress: a car accident, an arrest, a kidnapping. They need money immediately.

Step 4: Pressure. The scammer creates urgency. Don’t tell anyone. Don’t hang up. Send cash, gift cards, or a wire transfer right now. Every second counts.

Step 5: Disappear. Once the money moves, it’s gone. Victims discover the truth only when they reach their real family member - who was perfectly safe the whole time.

Real victims, real losses

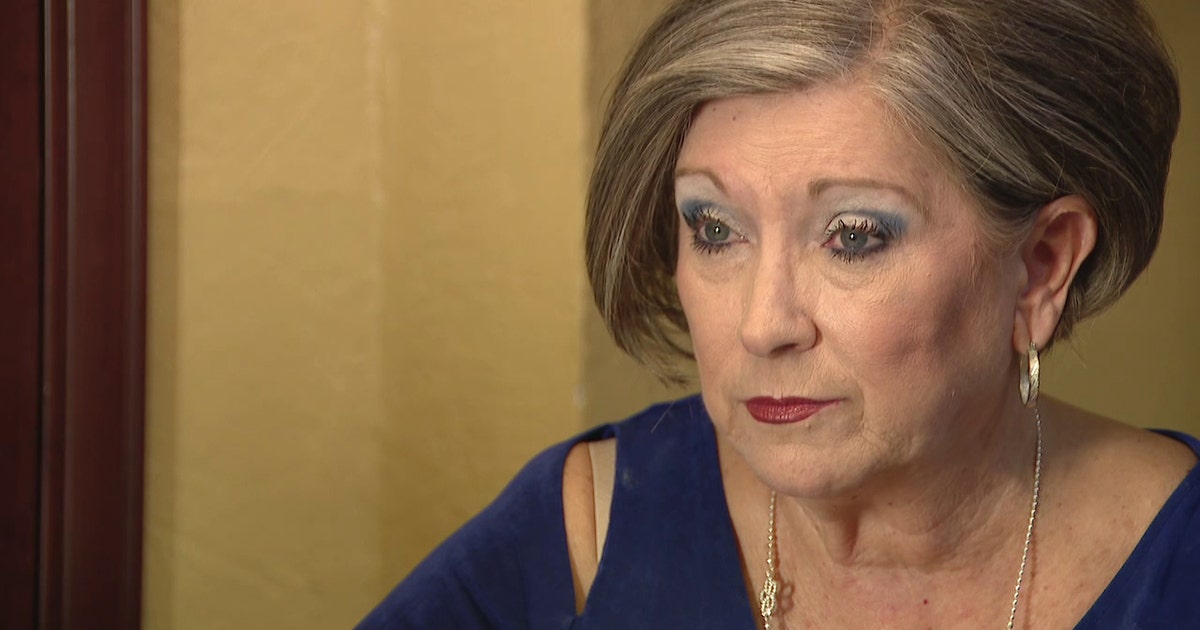

Sharon Brightwell, Florida - $15,000 lost. In July 2025, Sharon received a call from her “daughter,” who was crying and distraught and claimed she’d caused a car accident that injured a pregnant woman. The voice begged for $15,000 to avoid criminal charges. Sharon handed the cash to a courier. She discovered the deception only after speaking to her real daughter, who was completely fine.

Alice and Frank Boren, Alabama - targeted. Alice answered the phone and immediately recognised her great-grandson Cameron’s voice. “Mawmaw, I’m in a lot of pain. I have a broken nose and I’m bleeding,” the voice said, claiming he’d been in a car wreck and was being taken to jail. The voice was AI-generated.

Jennifer DeStefano, Arizona - $1 million ransom demand. Jennifer heard her daughter’s panicked, sobbing voice on the phone, followed by a man who claimed to have kidnapped her and demanded $1 million. Jennifer confirmed her daughter was safe before paying, but many victims aren’t so lucky.

These aren’t isolated incidents. They’re part of a global wave that is accelerating every month.

What every expert recommends

The FBI, FTC, cybersecurity researchers, and fraud investigators all give the same advice: establish a secret code word or phrase that only you and your family members know. On a suspicious call, ask for the code word. If the caller can’t provide it, hang up.

The FBI’s May 2025 Public Service Announcement is explicit: “Create a secret word or phrase with your family members to verify their identities.” The FTC has gone further, launching a Voice Cloning Challenge to encourage technical solutions to the problem.

It’s simple, effective advice. But in practice, many families never get around to setting one up. And even when they do, a static code word has problems: it never changes, someone might forget it, and it could be overheard or shared accidentally.

TrustWord: the code word, done right

TrustWord takes the universally recommended “family code word” concept and makes it actually work in the real world.

Instead of relying on a single static phrase, TrustWord gives every pair of people in your circle their own rotating passphrases, one for each direction, which change every 2.5 minutes. The phrases are generated cryptographically on your device - no server ever sees them. No internet connection is needed to verify.

Here’s what it looks like in practice:

Suspicious call: “Mum, I’ve been in an accident, I need money-”

You: “What’s your TrustWord for me?”

If it’s really them: They open the app and read out their word, and you check that it matches your screen. Then they ask for yours. Both sides verified. Identity confirmed - you can help with confidence.

If it’s a scammer: They can’t answer. Scam detected. Hang up.

Two words, two directions, mutual verification. A scammer can’t fake both sides.
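How can two phones agree on the same word every 2.5 minutes without ever contacting a server? The article doesn’t describe TrustWord’s actual algorithm, but a well-known pattern works the same way as the six-digit codes in authenticator apps (TOTP, RFC 6238): both devices hold a secret shared once at setup, and each independently derives a short value from that secret and the current time window. The Python sketch below assumes that approach. The shared secret, the toy `WORDS` list, the `trust_word` and `verify` helpers, the `"speaker->listener"` direction label, and the one-window clock-skew tolerance are all hypothetical illustrations, not TrustWord’s real design.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional

# Hypothetical toy word list. A real list would need to be far longer:
# with only 16 words, a caller guessing at random succeeds far too often.
WORDS = [
    "amber", "badger", "cobalt", "delta", "ember", "falcon", "glacier", "harbor",
    "indigo", "juniper", "kestrel", "lantern", "meadow", "nectar", "orchid", "pepper",
]

STEP_SECONDS = 150  # 2.5-minute rotation, as described in the article


def trust_word(pair_secret: bytes, speaker: str, listener: str,
               now: Optional[float] = None) -> str:
    """Word that `speaker` reads out to `listener` in the current time window."""
    if now is None:
        now = time.time()
    # Both phones compute the same counter as long as their clocks agree.
    counter = int(now // STEP_SECONDS)
    # Mixing in the direction gives the A->B word and the B->A word
    # separate HMAC inputs, so knowing one doesn't reveal the other.
    message = f"{speaker}->{listener}".encode("utf-8") + struct.pack(">Q", counter)
    digest = hmac.new(pair_secret, message, hashlib.sha256).digest()
    index = int.from_bytes(digest[:4], "big") % len(WORDS)
    return WORDS[index]


def verify(pair_secret: bytes, speaker: str, listener: str, spoken: str,
           now: Optional[float] = None, skew_windows: int = 1) -> bool:
    """Check a spoken word offline, accepting neighbouring windows for clock drift."""
    if now is None:
        now = time.time()
    for offset in range(-skew_windows, skew_windows + 1):
        expected = trust_word(pair_secret, speaker, listener,
                              now + offset * STEP_SECONDS)
        if hmac.compare_digest(expected.encode(), spoken.encode()):
            return True
    return False


if __name__ == "__main__":
    # Hypothetical secret; a real app would generate random bytes at pairing time.
    secret = b"example-secret-shared-at-pairing"
    now = time.time()
    word = trust_word(secret, "alice", "mum", now)    # Alice reads this out
    print(word, verify(secret, "alice", "mum", word, now))  # Mum's phone checks it
```

Accepting one window either side trades a little security for robustness: a word stays valid slightly longer, but phones whose clocks differ by a minute or two still agree.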

Free for up to 10 people - enough for most families. No accounts, no email, no phone number, no data collection. Set up in under a minute.

TrustWord is available for iOS and Android. Your first 10 people are completely free.